AI Employees in 2026: 6 Platforms Tested — What’s Real vs. Marketing

The phrase “AI employee” has gone from science fiction to a $2B+ market in under two years. Sintra.ai ranks #1 for the search term. Artisan raised $25M. 11x claims to have built “digital workers” for enterprise sales. Everyone promises AI that works like a team member.

We tested six platforms. Here’s what actually works, what’s still marketing, and what the landscape looks like heading into the second half of 2026.

The Market Right Now

The market isn’t just growing; it’s reshaping the economy around it. CNBC documented the shift in late February 2026: the IGV software ETF dropped nearly 30% in the first two months of the year. Gaming, legal, insurance, trucking, cybersecurity: the sell-off was indiscriminate. As CNBC’s Deirdre Bosa put it: “The same technology that was supposed to save software companies is now what is threatening to kill them.” When AI agents can do the work that software automates, the software itself becomes the middleman.

But not everyone agrees with the doom narrative. Hours after Nvidia posted earnings that beat expectations, Jensen Huang pushed back: “I think the markets got it wrong.” His argument is counterintuitive — AI agents won’t replace enterprise software, they’ll become its biggest power users. Nvidia itself has 42,000 human employees and is deploying “hundreds of thousands of digital employees” — and the number of software tools being consumed is growing, not shrinking, because agents use more tools than humans do. The truth likely sits between the bear and bull cases: some SaaS categories face real displacement, while systems of record and deep vertical software gain value as agent adoption scales.

The AI employee space has split into three tiers:

- Single-agent platforms — One AI that does one job (sales outreach, customer support, content writing)

- Multi-agent builders — Platforms where you wire together multiple agents into workflows

- Full-department solutions — Pre-built AI teams that replicate an entire business function

Most platforms are in tier 1 or 2. The difference matters more than marketing copy suggests.

The Reality Check: What the Data Actually Says

Before comparing platforms, it’s worth looking at what independent research tells us — because the gap between marketing claims and measured performance is wider than most people realize.

Companies Are Cutting Based on Potential, Not Performance

Harvard Business Review published what might be the most important AI labor study of 2026: “Companies Are Laying Off Workers Because of AI’s Potential, Not Its Performance.”

The key finding: the best-performing AI system tested completed only 2.5% of real projects successfully. Companies making headcount cuts — Block (40%), Amazon (16,000), UPS (20,000), Citigroup (20,000) — are doing so based on what AI might do, not what it’s proven to do.

Goldman Sachs found only 11% of companies actually cut employees due to AI. 47% used AI for productivity gains without cutting headcount. And 55% of employers who made AI-attributed layoffs already regret the decision.

The Klarna Reversal

Klarna became the AI employee poster child: CEO Sebastian Siemiatkowski cut headcount from 7,000 to under 3,000 employees, claiming AI handled 2-3 million conversations monthly (equivalent to 700+ agents) and saved $40M/year.

Then Klarna started hiring human customer service agents back. The AI handled volume, but customers wanted humans available. The cost savings narrative collapsed when customer satisfaction metrics were included.

This isn’t a failure story — it’s a calibration story. And it’s about to become common.

Gartner’s Prediction

Gartner predicts that half of companies that cut customer service staff due to AI will rehire by 2027. The pattern: aggressive cuts → quality problems → rehiring. Klarna was first. It won’t be the last.

What This Means

None of this means AI employees don’t work. They do — in specific domains. But the market narrative is inflated well beyond the evidence. The platforms worth investing in are the ones that are honest about scope and deliver in narrow, measurable lanes.

Dominic Vitucci (Onshore) offers the clearest counterpoint in a recent YC interview: his AI-native accounting firm targets $100M revenue with ~75 employees — over $1M per head, compared to roughly $100-150K at traditional Big Four firms. The key? He started with one narrow wedge (R&D tax credits), proved it worked, and expanded from customer pull. The pattern holds: AI employees succeed when scoped to specific, measurable outcomes — not when promised as universal replacements.

The 6 Platforms We Tested

1. Sintra.ai — The Volume Play

What they claim: 90+ AI employees across every business function.

What we found: Sintra dominates SEO for “AI employee” (position #1, 1,900 monthly searches) and has built an impressive category page for every department. The agents are essentially pre-configured prompt chains — you describe a task, the agent executes it.

Strengths:

- $97/month for unlimited agents is aggressive pricing

- Covers nearly every business function — marketing, sales, HR, finance, ops

- Clean UX, fast onboarding — you’re using agents within minutes

Limitations:

- Agents are single-turn — they execute a task and return a result, but don’t maintain context across sessions

- No shared workspace between agents — your “Marketing Manager” doesn’t know what your “SEO Specialist” found

- No real data integration — agents can’t connect to your actual tools (CRM, analytics, email)

Best for: Quick, one-off tasks. Writing an email, brainstorming ideas, generating a report template. The McDonald’s of AI employees — fast, cheap, predictable.

2. Lindy.ai — The Workflow Builder

What they claim: AI employees that automate entire workflows, not just single tasks.

What we found: Lindy is the most technically impressive pure-play platform. Their workflow builder lets you create multi-step automations where AI agents hand off work to each other.

Strengths:

- Visual workflow builder is genuinely powerful

- Triggers (email received, calendar event, form submitted) make agents proactive, not just reactive

- Memory across conversations — agents learn your preferences

- Free tier lets you test before committing

Limitations:

- Building complex workflows requires significant setup time

- The “employee” metaphor breaks down — these are really sophisticated automations

- Limited to what their integration library supports

Best for: Founders who think in systems. If you’d build a Zapier automation, you’d build a Lindy workflow — but with AI making the decisions at each step.

3. Artisan — The SDR Replacement

What they claim: Ava, an AI Sales Development Rep that finds leads, researches them, and sends personalized outreach at scale.

What we found: Artisan is laser-focused on outbound sales. Ava is one agent doing one job — and doing it reasonably well. The pitch is simple: replace your SDR team.

Strengths:

- Purpose-built for sales outreach — every feature serves that use case

- Built-in lead database and enrichment

- Personalization goes beyond “Hi {first_name}” — researches LinkedIn, company news, recent posts

Limitations:

- One agent, one function — no broader business capability

- Enterprise pricing (~$1,000+/month) puts it out of reach for SMBs

- Still limited by the fundamental problem of cold outreach — even personalized AI emails have low response rates

Best for: Mid-market sales teams with budget who want to scale outbound without hiring more SDRs.

4. 11x.ai — The Enterprise Digital Worker

What they claim: AI digital workers (Alice for SDR, Jordan for phone calls) that integrate into enterprise workflows.

What we found: 11x targets enterprise buyers with a polished product and enterprise-grade integrations. Alice (SDR) and Jordan (phone) are their two “workers.”

Strengths:

- Deep CRM integrations (Salesforce, HubSpot)

- Enterprise compliance and security features

- Phone capabilities (Jordan) are genuinely novel — AI that makes and takes calls

Limitations:

- Only two workers — very narrow scope

- Enterprise pricing and sales process

- The “digital worker” framing overpromises relative to what two sales-focused agents deliver

Best for: Enterprise sales teams already using Salesforce/HubSpot who want AI-augmented outbound.

5. Relevance AI — The Builder’s Platform

What they claim: Build, deploy, and manage AI agents for any business process.

What we found: Relevance AI is the most developer-oriented platform. It’s closer to a low-code AI agent framework than a pre-built employee.

Strengths:

- Highly customizable — you build exactly the agent you need

- Strong integration library (APIs, databases, SaaS tools)

- Multi-agent orchestration is possible (agents triggering other agents)

Limitations:

- Requires technical skill to set up effectively

- “Agent” not “employee” — you’re building tools, not hiring team members

- The gap between “possible” and “production-ready” is significant

Best for: Technical teams that want to build custom AI workflows without starting from scratch.

6. Sierra — The Customer Service Specialist

What they claim: AI agents for customer experience — handling support, returns, upgrades, and complex customer interactions.

What we found: Sierra is perhaps the most honest about what AI employees can do today. They focus on customer service — a domain where AI agents genuinely outperform human teams on speed and consistency.

Strengths:

- Deep vertical focus on customer experience

- Handles complex multi-step customer interactions (not just FAQ lookup)

- Enterprise customers include major brands

- Co-founded by Bret Taylor (former Salesforce co-CEO), which signals serious technical pedigree

Limitations:

- Customer service only — no broader business capability

- Pricing not publicly available (enterprise sales process)

- You’re buying one excellent employee, not a team

Best for: Companies with high support volume who want to dramatically reduce response times and headcount in customer service.

The Economics: Real Costs vs. Marketing Claims

The pitch: “AI employees cost 95% less than humans.” The reality is more nuanced.

Direct Cost Comparison

| Role | Human (Fully Loaded) | AI Agent | Savings |
|---|---|---|---|
| Customer service agent | $55K-$75K/yr | $5K-$15K/yr | 73-93% |
| Junior developer | $80K-$120K/yr | $240-$6K/yr | 95-99% |
| SEO analyst | $65K-$90K/yr | $3K-$8K/yr | 88-96% |
| SDR/BDR | $60K-$85K/yr | $10K-$25K/yr | 71-85% |
| Data analyst | $75K-$110K/yr | $5K-$12K/yr | 85-95% |

The Hidden Cost Nobody Mentions

30-50% of total AI agent investment goes to human supervision.

Basic implementations need 0.5-1 FTE for oversight. Complex enterprise setups need 2-3 FTEs for supervision, quality control, and error correction. An AI employee saving $60,000/year but requiring $30,000 in human oversight saves $30,000 — still good, but not the 95% reduction from the pitch deck.
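The oversight arithmetic above can be worked through in a few lines. This is a minimal sketch, not a costing model: the $65,000 human cost and $5,000 agent cost are hypothetical inputs chosen only so that gross savings match the $60,000 in the example, and `net_savings` is an illustrative helper name.

```python
def net_savings(human_cost: float, agent_cost: float, oversight_cost: float) -> tuple[float, float]:
    """Return (net annual savings, net savings as a fraction of the human cost)."""
    gross = human_cost - agent_cost   # the headline "savings" figure
    net = gross - oversight_cost      # what remains after paying for supervision
    return net, net / human_cost


# Hypothetical inputs chosen so gross savings equal the $60,000 from the text.
HUMAN_COST = 65_000
AGENT_COST = 5_000
OVERSIGHT_COST = 30_000

net, net_fraction = net_savings(HUMAN_COST, AGENT_COST, OVERSIGHT_COST)
headline_fraction = (HUMAN_COST - AGENT_COST) / HUMAN_COST

print(f"Headline savings: {headline_fraction:.0%}")
print(f"Net savings: ${net:,.0f} ({net_fraction:.0%})")
```

Under these assumed inputs the headline reduction of roughly 92% falls to roughly 46% once supervision is counted, which is the gap between the pitch deck and the budget line.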

The Consumption Model Shift

The most important economic shift: AI employees turn fixed costs into variable costs.

- Idle AI employee costs ~$0 (no salary to pay when there’s no work)

- Spike months scale instantly (no hiring, onboarding, or training lag)

- Quality scales with the model (upgrade the underlying model, every AI employee improves)

The right comparison isn’t “AI vs. human cost.” It’s “fixed payroll vs. variable operations.” For businesses with variable workloads, this changes everything.
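To make the fixed-versus-variable comparison concrete, here is a small sketch with invented numbers. The payroll figure, the per-task price, and the monthly workload are all assumptions for illustration, and the model ignores oversight costs and the limit on how many tasks one person can handle.

```python
def fixed_payroll_cost(monthly_payroll: float, months: int) -> float:
    """Payroll is owed every month regardless of how much work arrives."""
    return monthly_payroll * months


def usage_cost(tasks_per_month: list[int], price_per_task: float) -> float:
    """Usage-based cost scales with the number of tasks actually processed."""
    return sum(tasks_per_month) * price_per_task


def break_even_tasks(monthly_payroll: float, price_per_task: float) -> float:
    """Monthly task volume at which usage pricing costs the same as payroll."""
    return monthly_payroll / price_per_task


# Hypothetical figures: one salaried role versus a per-task agent price.
MONTHLY_PAYROLL = 5_000.0
PRICE_PER_TASK = 0.50
WORKLOAD = [2_000, 2_000, 12_000, 1_000]  # tasks per month, with one spike

fixed_total = fixed_payroll_cost(MONTHLY_PAYROLL, len(WORKLOAD))
usage_total = usage_cost(WORKLOAD, PRICE_PER_TASK)
threshold = break_even_tasks(MONTHLY_PAYROLL, PRICE_PER_TASK)

print(f"Fixed payroll over {len(WORKLOAD)} months: ${fixed_total:,.0f}")
print(f"Usage-based over the same period: ${usage_total:,.0f}")
print(f"Break-even volume: {threshold:,.0f} tasks/month")
```

With these assumed numbers the variable model is cheaper over the period, but the spike month exceeds the break-even volume. Steady, high-volume workloads narrow or reverse the advantage, so the comparison is worth running on your own task counts.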

Where AI Employees Actually Work (and Where They Don’t)

After testing platforms and running our own offices, a clear pattern emerges. AI employees work in domains where: tasks are well-defined, data sources are structured, feedback loops are fast, and errors are recoverable.

Production-Ready Today

| Domain | Why It Works | Evidence |
|---|---|---|
| Customer service (Tier 1) | Structured queries, known answers | Klarna (volume), Sierra, Bland AI |
| Code writing | Verifiable outputs (tests pass/fail) | Cursor ($100M ARR), Devin, Claude Code |
| SEO analysis | API-driven data, recurring reports | TeamDay SEO Office (4 MCPs, 7 endpoints) |
| Content drafting | Human reviews final output | TeamDay Content Studio |
| Email marketing | Measurable (open rates, clicks) | TeamDay Newsletter Studio (Mailgun) |
| Data analysis | SQL is verifiable, charts are visual | TeamDay Data Analyst |
| Sales outreach | Template-based, A/B testable | 11x ($10M ARR), Artisan |
| Phone calls | Scripted conversations | Bland AI ($299+/mo) |
| Supply chain optimization | Rule-based workflows, measurable outcomes | Ex-Tesla team (McNeill ventures) |

The supply chain case is especially telling. Jon McNeill (former Tesla President) described how his team rebuilt Tesla’s ML supply chain platform with agentic AI: agents that understand a client’s complex work rules in hours and design optimized workflows in days — replacing 9-12 month ERP implementations. The key ingredient isn’t the AI alone; it’s domain experts who know which rules matter, wielding agents as an “exoskeleton” of capability. This expert-plus-agent pattern is emerging as the most reliable model for enterprise AI employees.

Not Ready (Regardless of Marketing Claims)

| Domain | Why It Fails | Current State |
|---|---|---|
| Strategic planning | Requires creative judgment | Karpathy: “ideas are pretty bad” |
| Complex negotiations | Nuance, relationship context | No credible deployment |
| Original research | Needs methodology discipline | Agents run spurious experiments |
| People management | Emotional intelligence, trust | Not attempted |
| Crisis response | Novel situations, high stakes | Too risky for autonomous agents |

What We Learned: The Single-Agent Ceiling

Most of the platforms we tested hit the same wall: single agents working in isolation can’t replicate how a real team operates.

A human marketing team works because the SEO specialist sees the content writer’s output, the social media manager knows what campaigns are running, and the analytics person feeds insights back to everyone. They share context. They coordinate.

Most AI employee platforms give you 10 agents that each live in their own silo. Your “AI SEO Specialist” has never seen what your “AI Content Writer” produced. Your “AI Data Analyst” can’t access the dashboards your “AI Marketing Manager” needs.

This is the fundamental architectural problem.

The Coordination Gap

Jerry Murdock, managing director at Insight Partners, frames this clearly:

“An autonomous agent becomes an employee. You give it credentials. You give it identity. And then it’s up to the agent to make the decision.”

But credentials and identity aren’t enough. Real employees have:

- Shared context — access to the same documents, dashboards, and communication channels

- Handoffs — the ability to pass work to a colleague with full context

- Accumulated knowledge — memory of what’s been tried, what worked, what didn’t

- Tool access — connection to the actual systems where work happens (not just AI-generated text)

Most platforms deliver the identity. Few deliver the infrastructure.

Where It’s Actually Working

Klarna: The Full Story

Sebastian Siemiatkowski cut Klarna from 7,000 to under 3,000 employees while expanding into new product lines:

“We’ve gone from 7,000 people, we’re now below 3,000. We’ve shrank 50%. And I didn’t ask for a single dime to do all this.”

But as we covered above, Klarna later started hiring human agents back. The full story isn’t “AI replaced 700 agents.” It’s “AI handled volume, but customers wanted humans available.” The equilibrium is hybrid — and Klarna found it the hard way.

The real lesson: Klarna didn’t buy an “AI employee platform.” They built custom AI systems integrated into their existing infrastructure. The companies seeing real results are building, not buying off-the-shelf agents. And they’re calibrating the human-AI ratio through real customer feedback, not marketing projections.

Solo Founders: The Extreme Case

Ben Broca reached $1.8M ARR with Polsia as a solo founder, managing 2,000+ autonomous companies — up from $1M ARR and 1,100 companies just weeks earlier. His approach: Opus 4.6 for strategic decisions, specialized agents for execution, everything running through his own orchestration layer. In a recent interview with Andreas Klinger, Broca revealed the design philosophy: “Treat the user as an investor. You have passive investors and active investors. If it’s a passive investor, they don’t reply to your email. They ghost you. But it doesn’t mean they don’t care.” The AI operates as CEO; the human provides direction — or doesn’t.

The lesson isn’t “buy an AI employee platform.” It’s that the right AI tooling lets one person operate like a team — if the infrastructure connects everything together.

The Practitioner Case: $5,400 AI Agent Deal

Not every AI employee deployment is a Silicon Valley story. An independent developer built a WhatsApp-based AI agent for a criminal defense lawyer in Australia. The agent transcribes audio messages from potential clients, responds intelligently 24/7, creates geographic heat maps for targeted ad campaigns, filters high-priority cases into Salesforce, and sends invoices automatically. Development: five weeks. Testing: two weeks. Contract value: $5,400. The agent replaces multiple staff members who were handling lead qualification manually — and losing potential clients because they couldn’t respond fast enough.

This is the pattern emerging in production: AI employees work best when they handle a specific, well-defined workflow with clear inputs (WhatsApp messages) and outputs (CRM records, emails) — not when they’re generic “do everything” assistants.

The Multi-Agent Proof: 16 Agents, One Compiler

Anthropic researcher Nicholas Carlini demonstrated what coordinated AI employees can build: 16 Claude agents working in parallel for two weeks produced a 100,000-line C compiler from scratch. Cost: $20,000. The compiler builds the Linux kernel, PostgreSQL, FFmpeg, and Redis. The coordination mechanism was simple — git-based task locking, automated verification against GCC, no central orchestrator. This is the template for how AI departments will operate: parallel specialists with automated quality checks, scaling by adding more agents rather than more human oversight.
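The coordination idea described above, where agents claim tasks through locks rather than a central orchestrator, can be illustrated with a short sketch. This is not the project's actual code: the source says only that the locking was git-based, and this example substitutes local lock files created atomically. The function names, task names, and agent IDs are hypothetical.

```python
import os
import tempfile
from pathlib import Path
from typing import Optional


def try_claim(lock_dir: Path, task_id: str, agent_id: str) -> bool:
    """Claim a task by creating its lock file; return False if it is already claimed."""
    lock_path = lock_dir / f"{task_id}.lock"
    try:
        # O_CREAT | O_EXCL makes creation fail if the file exists,
        # so exactly one agent can win a given task.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as handle:
        handle.write(agent_id)  # record the owner for later inspection
    return True


def claim_next(lock_dir: Path, tasks: list[str], agent_id: str) -> Optional[str]:
    """Return the first unclaimed task for this agent, or None if none remain."""
    for task_id in tasks:
        if try_claim(lock_dir, task_id, agent_id):
            return task_id
    return None


def matches_reference(candidate_output: str, reference_output: str) -> bool:
    """Stand-in for automated verification: compare against a trusted reference."""
    return candidate_output == reference_output


with tempfile.TemporaryDirectory() as tmp:
    locks = Path(tmp)
    tasks = ["parse-structs", "codegen-loops"]  # hypothetical task names
    first = claim_next(locks, tasks, "agent-1")
    second = claim_next(locks, tasks, "agent-2")
    third = claim_next(locks, tasks, "agent-3")  # nothing left to claim

print(first, second, third)
```

The design point is that agents never talk to each other directly. The shared lock directory (a git repository in the original description) is the only coordination surface, and a reference check decides whether a finished task is accepted.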

The Team Approach: Why Departments Beat Individuals

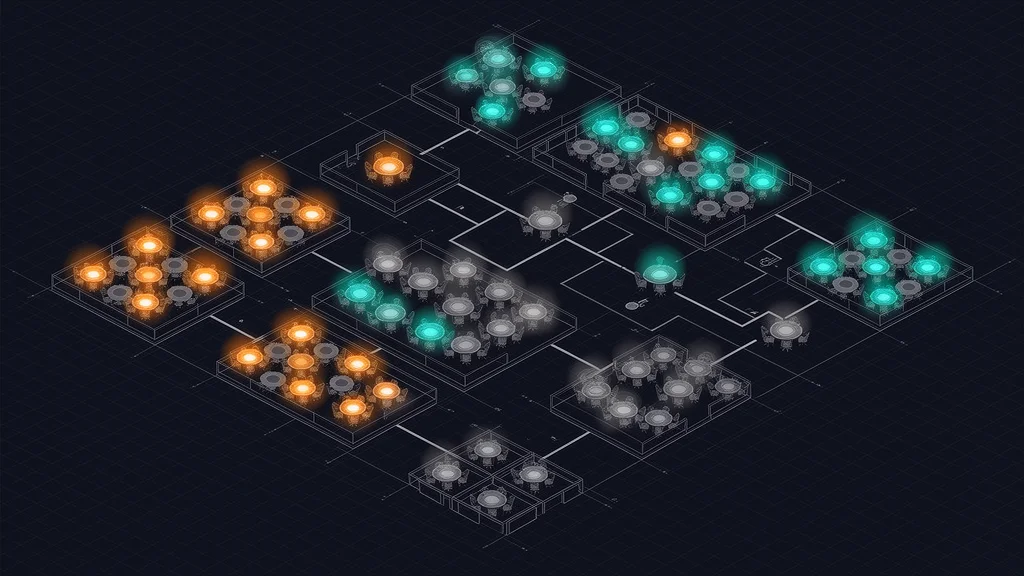

The platforms that work best in 2026 aren’t selling individual AI employees. They’re building AI departments — coordinated groups of agents that share context, hand off work, and operate on real data.

This is what we built with TeamDay’s AI agents. Each agent is a pre-configured department:

| Agent | What It Does | Real Data |
|---|---|---|
| SEO Manager | Pulls from Ahrefs, Search Console, SE Ranking. Runs audits, tracks rankings, finds opportunities. | 7 dashboard endpoints, 3 autonomous missions |
| Content Creator | Three agents collaborate: writer, image creator, translator. Produces blog posts in 10+ languages. | Real blog output, SEO-optimized |
| Video Producer | Script → AI visuals → narration → assembly → YouTube upload. Full production pipeline. | 4 AI models, scene-by-scene production |
| Data Analyst | Ask questions in English, get SQL queries, insights, dashboards, and scheduled reports. | BigQuery, PostgreSQL, MySQL |
| Newsletter Manager | Subscriber management, campaign creation, delivery via Mailgun, performance tracking. | Real send/open/click data |
| Visual Designer | Generate blog covers, social posts, ad creatives from text prompts. Four AI models. | FAL AI, Gemini, OpenAI, Grok |
| WordPress Manager | Content publishing, plugin management, performance monitoring for WordPress sites. | Works with WP.com and self-hosted |

The difference: these aren’t chatbots with job titles. Each team has a real dashboard connected to real data sources via MCP integrations. The SEO Office doesn’t guess about your rankings — it pulls live data from Ahrefs and Search Console. The Email Marketing team doesn’t draft emails in isolation — it manages real subscriber lists and tracks actual delivery metrics.

The Compound Effect

Here’s what thin wrappers can’t replicate — the output of one office feeds the input of another:

SEO Office → finds keyword opportunity (position 8, high volume)

↓

Content Studio → drafts blog post targeting that keyword

↓

Design Studio → generates cover image and social graphics

↓

Newsletter Studio → distributes to subscriber list

↓

UA Creative Studio → generates ad creatives, deploys via Meta Ads

↓

Data Analyst → measures performance, reports ROI

↓

SEO Office → tracks ranking improvement, finds next opportunity

Each office is useful alone. Together, they form a flywheel. This is the compound advantage that single-agent platforms structurally cannot offer.
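The hand-off chain above comes down to one structural idea: every stage reads from and writes to the same workspace. The sketch below illustrates that idea only. The `Workspace` class, the office functions, and the sample keyword are hypothetical and do not represent TeamDay's API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Workspace:
    """Shared context that every office reads from and writes to."""
    artifacts: dict[str, str] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)


Office = Callable[[Workspace], None]


def seo_office(ws: Workspace) -> None:
    ws.artifacts["keyword"] = "ai employees"  # hypothetical finding
    ws.log.append("seo")


def content_studio(ws: Workspace) -> None:
    keyword = ws.artifacts["keyword"]  # consumes the SEO office's output
    ws.artifacts["draft"] = f"Blog post targeting '{keyword}'"
    ws.log.append("content")


def newsletter_studio(ws: Workspace) -> None:
    draft = ws.artifacts["draft"]  # consumes the content studio's output
    ws.artifacts["campaign"] = f"Newsletter featuring: {draft}"
    ws.log.append("newsletter")


def run_pipeline(offices: list[Office], ws: Workspace) -> Workspace:
    """Run each office in order against the same shared workspace."""
    for office in offices:
        office(ws)
    return ws


ws = run_pipeline([seo_office, content_studio, newsletter_studio], Workspace())
print(ws.artifacts["campaign"])
print(ws.log)
```

A siloed agent would fail at the first `ws.artifacts[...]` lookup because nothing upstream wrote to its context. That lookup is the whole difference between a set of agents and a department.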

What We Learned Running These (The Hard Way)

Day-1 value or nothing. Early offices had scheduled missions but no initialRun. Users saw an empty dashboard and a promise of “results next Monday.” Nobody came back. Now every mission runs immediately on activation.
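As a rough illustration of that fix, the sketch below runs a mission once at activation time instead of waiting for its first scheduled slot. Only the `initialRun` idea comes from the text; the `Mission` class, its fields, and the `activate` function are assumed names, and the schedule string is stored but not parsed.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Mission:
    name: str
    schedule: str                # e.g. a cron expression; not parsed in this sketch
    task: Callable[[], str]
    initial_run: bool = True     # mirrors the initialRun flag mentioned in the text
    results: list[str] = field(default_factory=list)


def activate(mission: Mission) -> None:
    """On activation, run once immediately so the dashboard is not empty on day one."""
    if mission.initial_run:
        mission.results.append(mission.task())
    # A real scheduler would also register mission.schedule here.


eager = Mission("weekly-seo-audit", "0 9 * * MON", lambda: "audit complete")
lazy = Mission("weekly-seo-audit", "0 9 * * MON", lambda: "audit complete", initial_run=False)

activate(eager)
activate(lazy)

print(len(eager.results), len(lazy.results))
```

The `lazy` mission reproduces the original problem: activation succeeds, but the user sees nothing until the first scheduled run.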

Progressive access beats auto-install. Users with no Ahrefs account saw error states when we auto-configured everything. Now each office starts empty and unlocks dashboard sections as you connect data sources. This mirrors how real employees onboard — gradually, not all at once.

Chat + data > dashboard alone. The dashboard shows charts. The chat answers “why.” When the SEO Office generates a weekly report, you can ask “why did our ranking for ‘ai agents’ drop?” and get a specific answer grounded in Ahrefs data — not a generic suggestion.

AI employees are staff, not leadership. This matches Karpathy’s finding exactly. Our offices are exceptional at running scheduled analyses, generating reports from real data, and monitoring metrics. They are not good at deciding which strategy to pursue or evaluating whether their own output is good enough. Deploy them for execution, not strategy.

The difference between an AI employee and an AI chatbot isn’t intelligence — it’s infrastructure. Real data connections, persistent workspaces, autonomous schedules, and cross-agent coordination.

How to Choose

If you want quick, cheap, single-task execution: Sintra ($97/month) gives you the most agents for the least money. Think of it as a toolkit, not a team.

If you want to build custom workflows: Lindy (free tier available) is the most flexible builder. You’ll invest time upfront but get exactly what you need.

If you need enterprise sales automation: Artisan or 11x, depending on whether you need outbound emails (Artisan) or phone + email (11x). Budget $1,000+/month.

If you need customer service at scale: Sierra is the strongest vertical play. Enterprise pricing, enterprise results.

If you want AI departments with real data integration: TeamDay gives you pre-built AI employees that connect to your actual tools — not just AI generating text, but AI working with your real analytics, your real email lists, your real content pipeline. Free to start, BYOK pricing.

What’s Coming Next

The AI employee market in 2026 is where SaaS was in 2008: early, fragmented, and about to consolidate. Four predictions:

- Single-agent platforms will either add coordination or fade. The market is moving from “AI that does a task” to “AI that runs a function.” Platforms without multi-agent infrastructure will struggle.

- Data integration becomes the moat. The agents that win will be the ones connected to real business data, not the ones with the best chat interface. MCP (Model Context Protocol) is emerging as the standard for this, and platforms that adopt it early will have an advantage.

- Consumption-based pricing replaces seat-based licensing. When agents are the users, per-seat pricing makes no sense. The shift to usage-based models (pay for what the agent actually does) will reshape the economics of the entire category.

- Regulation will shape who wins. The first major AI safety law, New York’s RAISE Act, now requires the five largest AI companies to publish safety plans and report critical incidents. A $125M super PAC is fighting to block further regulation. But as Alex Bores, the law’s author, argues: “Putting a seat belt on the Lamborghini doesn’t really slow down the Lamborghini.” Platforms that build trustworthy AI deployments now will have an advantage when the regulatory floor rises.

The question isn’t whether AI employees will work. They already do, for specific tasks. The question is whether your AI employees can work together — sharing context, coordinating handoffs, and operating on real data. That’s the ceiling most platforms haven’t broken through yet.

Want to see AI employees in action? Hire your first AI employee — free to start, no credit card required.